LUCID

PAST

Creating Virtual Dreams from Historical Memory

The Problem Space

Archives as Unexplored Territory

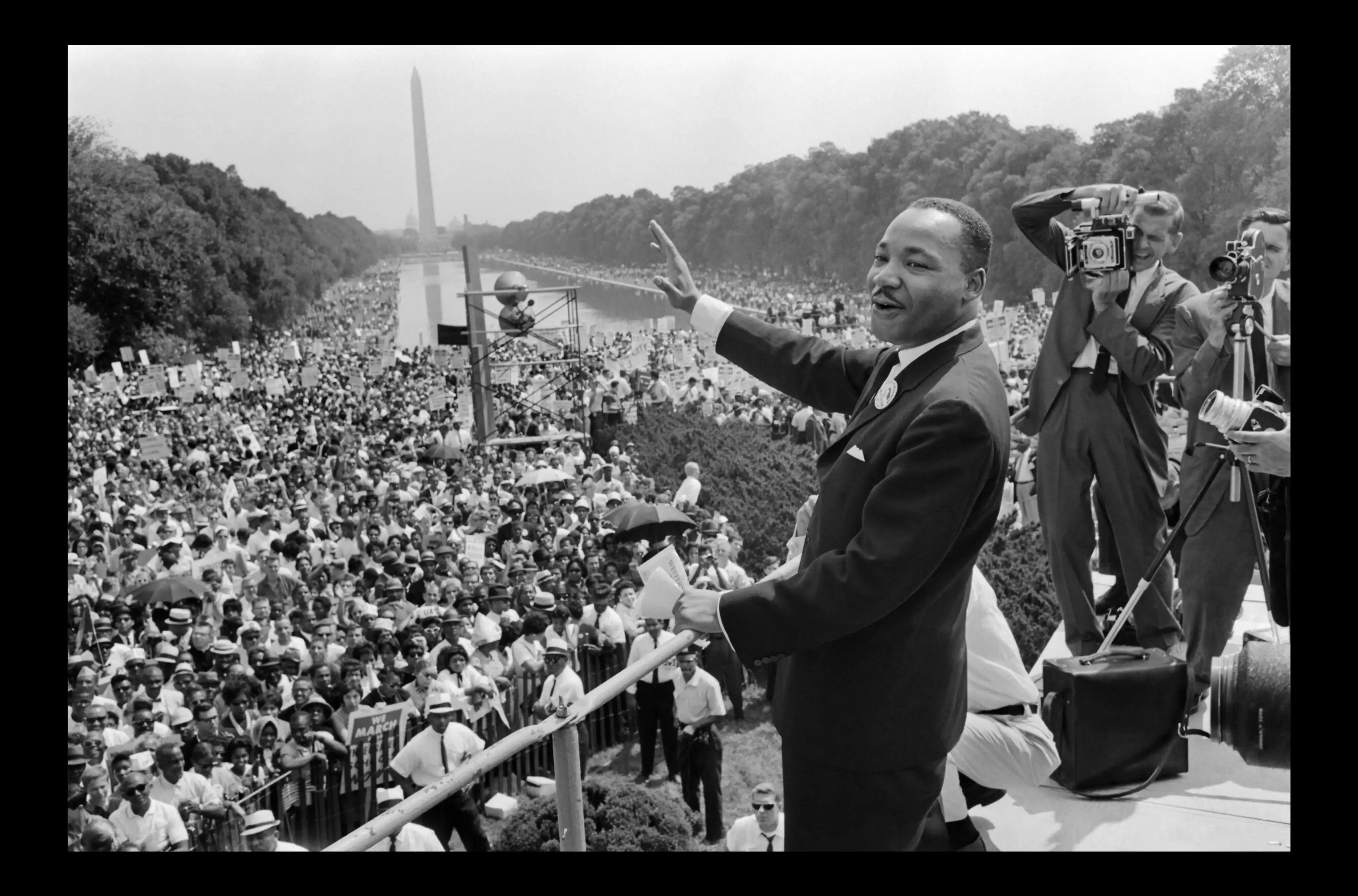

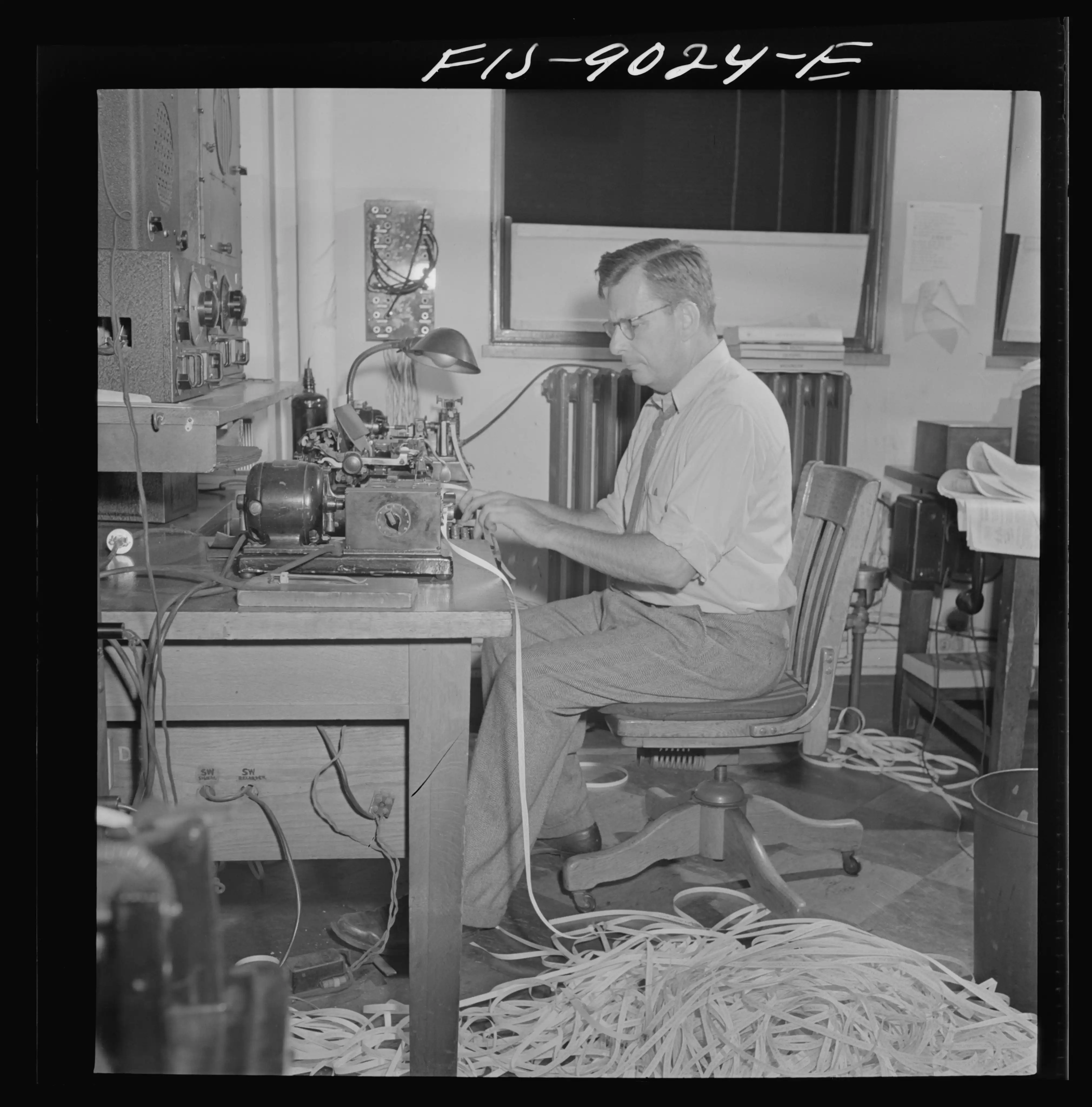

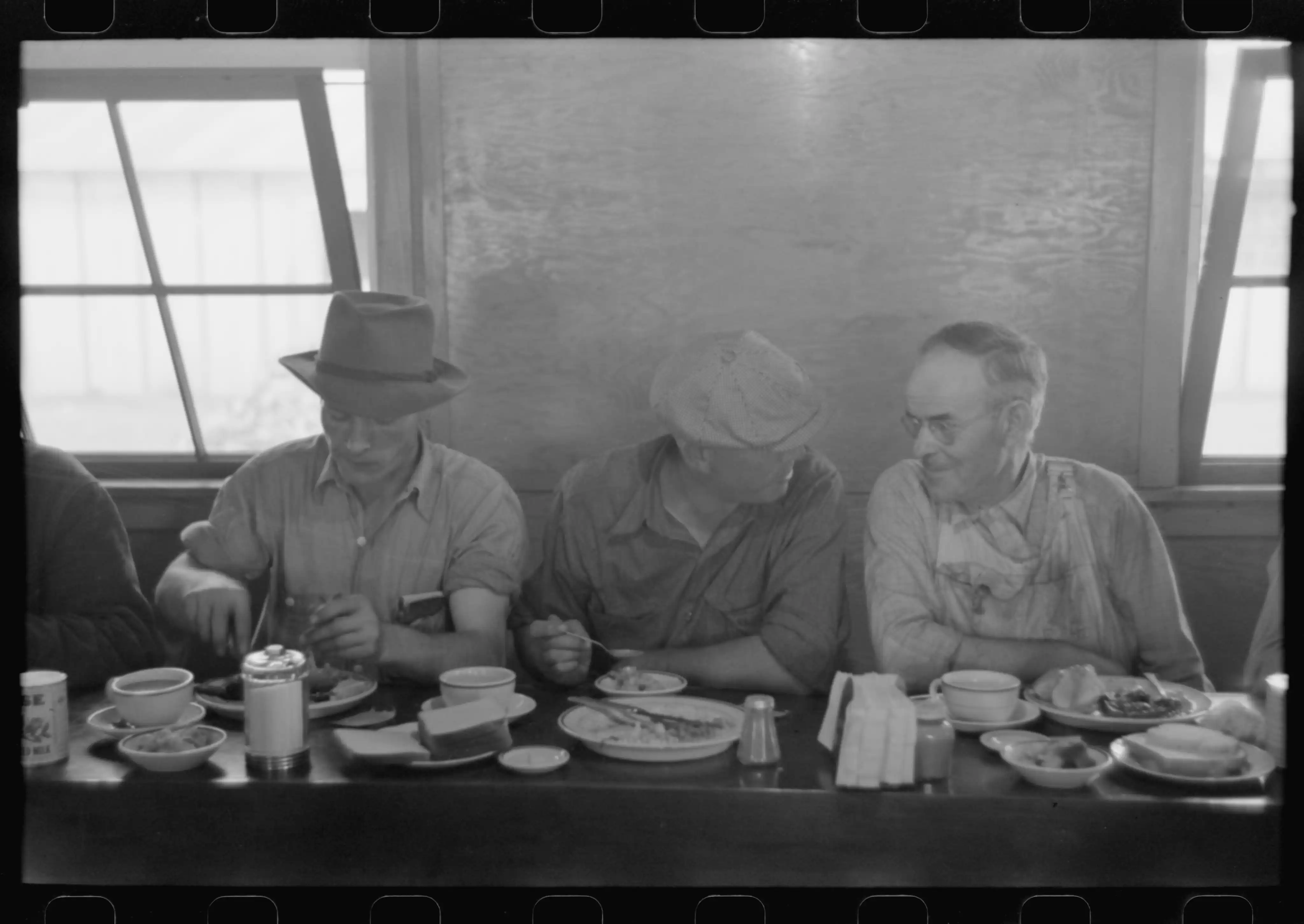

The Library of Congress holds over 16 million images in its Prints and Photographs Division, with around 1.6 million digitized. Museums worldwide have similar collections. Yet most remain locked behind keyword search and chronological browsing - interactions designed for librarians, not storytellers.

XR enables natural, curiosity-driven exploration. But existing museum VR experiences are linear guided tours - just digital versions of audio guides.

Three core issues I identified

Discovery is broken.

Context is rigid.

Engagement is shallow.

The Insight

The brain holds personal memory.

Archives hold collective memory.

Dreams are how we explore one.

LucidPast is how we explore the other.

When you dream, your brain does not present memories in chronological order. It follows associations - a face leads to a place leads to a feeling. The result is not chaos. It is meaning, arrived at sideways.

Archives work the same way. The Library of Congress does not know which photograph will matter to you, or why. Keyword search forces you to already know the answer before you begin. LucidPast replaces the search bar with the dream - letting association, attention, and curiosity do what they have always done best.

Core Innovation

How Dreams Actually Work

I asked: Can we recreate this algorithmically?

The system I designed:

- →

Gaze reveals intent

- →

Intent triggers transitions

- →

Transitions build themes

- →

Themes create meaning

Design System Architecture

I broke the experience into three interconnected subsystems. Each solves a specific design challenge.

Persistent Object Transition

Environmental changes in VR cause disorientation. How do you morph between completely different scenes without losing the user?

The gazed object becomes a spatial anchor - context morphs around it, eliminating disorientation.

Three-Act Progressive Narrowing

Pure random exploration becomes meaningless. Pure curation removes agency. How do you balance both?

Three-act structure applied algorithmically - 100% open for 7 minutes, narrowing to 30% of options by minute 20.

Pathway-Dependent Interpretation

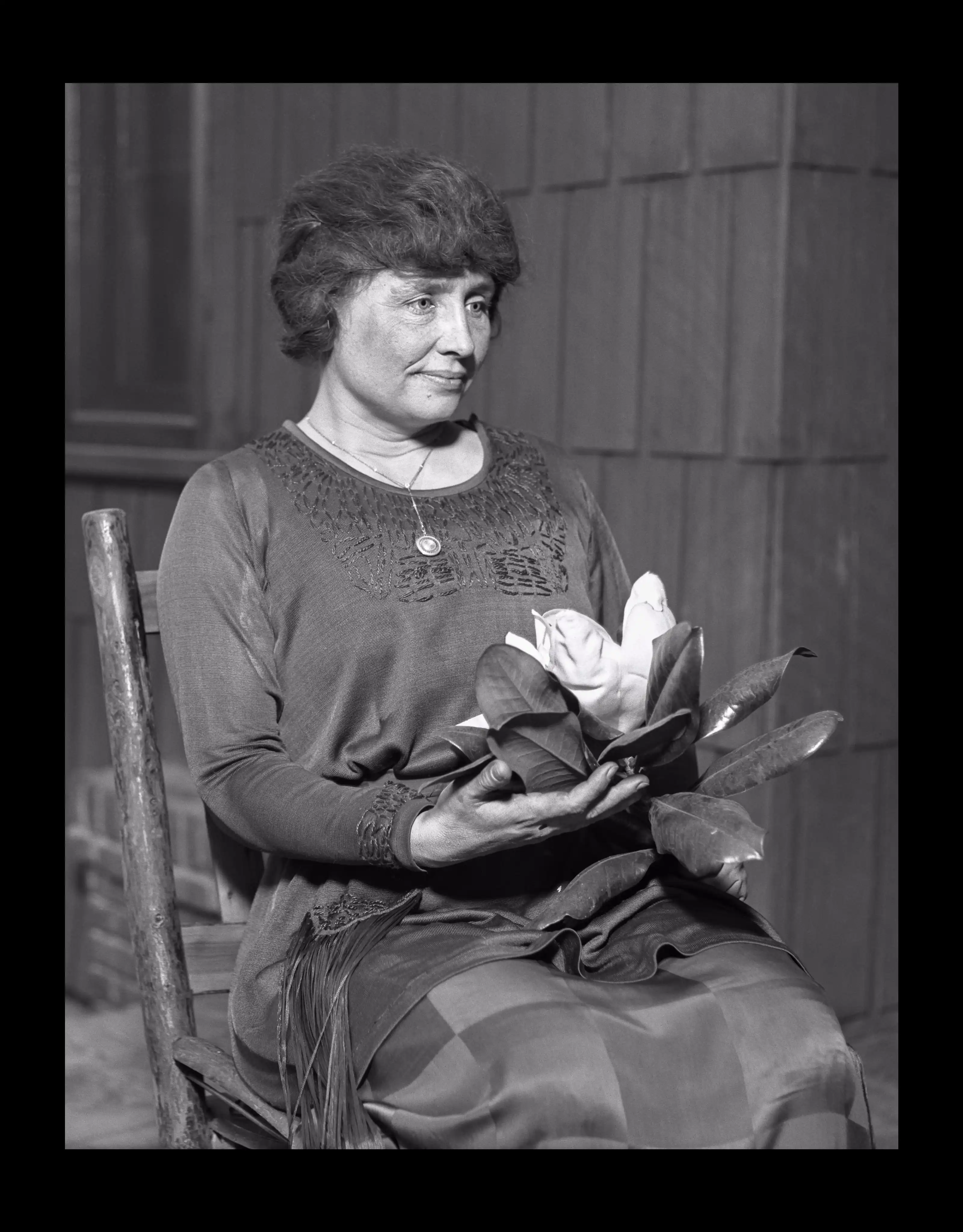

The same photograph can reveal labor history, tech innovation, or cultural change. Institutions usually pick one.

Pathway priming activates different semantic networks - the same photograph means different things depending on how you arrived at it.

Interaction storyboard - 36 panels mapping the full experience flow before implementation.

| Phase | Duration | System Behavior | User Experience |

|---|---|---|---|

| Act 1 | 0-7 min | Observes gaze patterns, 100% open | "I can go anywhere" |

| Act 2 | 7-13 min | Narrows to 40% of options matching detected themes | "This is getting interesting" |

| Act 3 | 13-20 min | Focuses to 30% of options, clear thematic thread | "Oh, this is about..." |

Interaction Design

Gaze as Primary Input

Why gaze? I evaluated five alternatives:

Dwell time calibration validation: Research shows 300-400ms causes accidental activation ("Midas Touch problem"). Apple Vision Pro's Dwell Control (accessibility mode) defaults to 1000ms - I validated this as the right threshold to avoid accidental activation.

Two Interaction Modes

Early concepting revealed a problem: "What if I'm looking at a rendering artifact or examining something out of confusion, not interest?" Solution: Users choose at entry, switch anytime.

Flow Mode

- For maximum immersion

- Pure 1-second gaze → automatic transition

- Minimizes cognitive load

Intentional Mode

- 1-second gaze + pinch gesture confirmation

- Explicit gestures don't break flow state

Technical Implementation

Volumetric Reconstruction: Two Approaches Validated

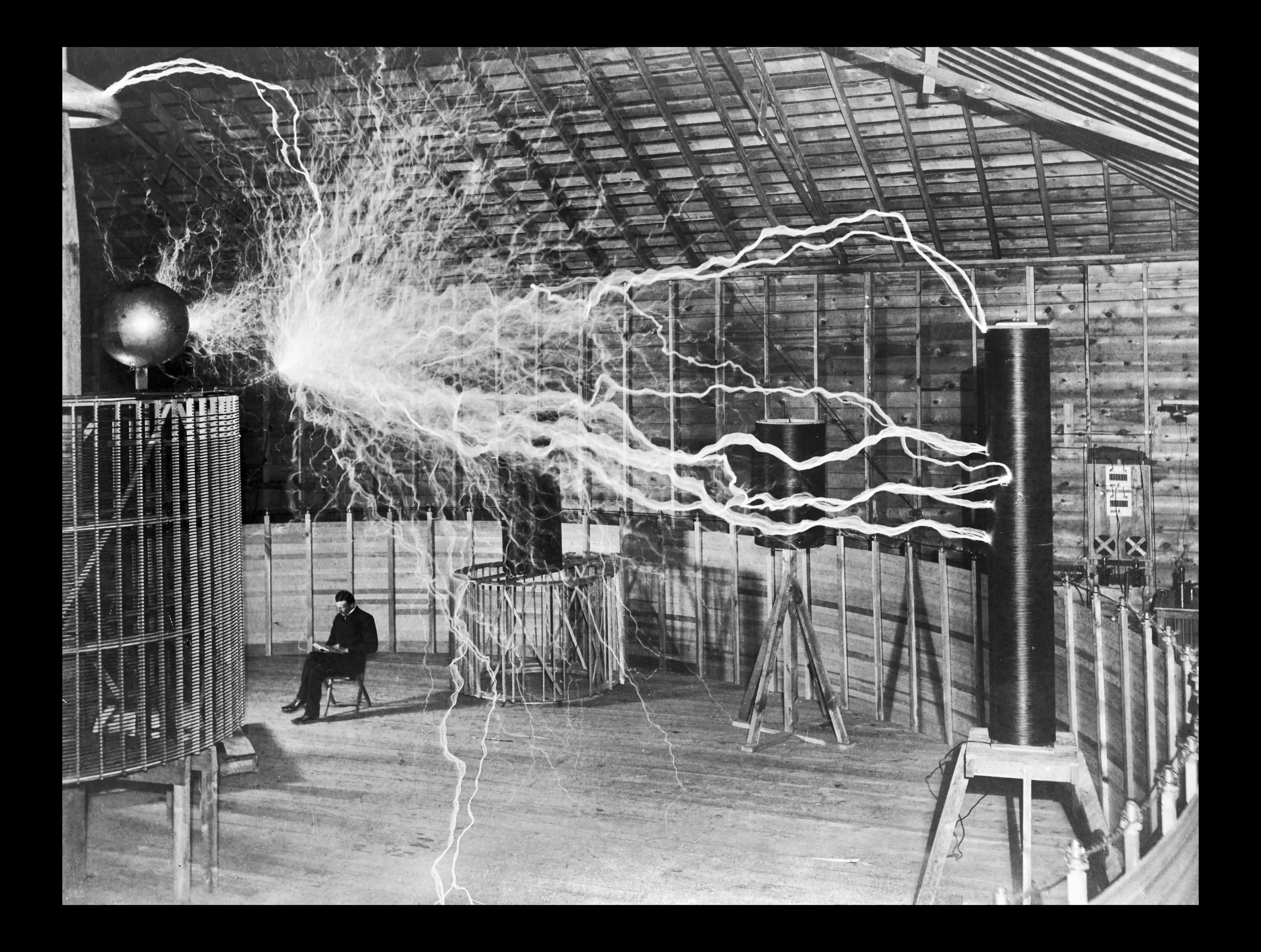

Challenge: Creating explorable 3D environments from single flat photographs without fabricating content beyond what's visible. I validated two fundamentally different pipelines on the same test dataset of 10 diverse archival photographs:

Model Selection - Iterative Validation

Pipeline A — Depth Pro + Mesh

Depth Pro depth estimation

~8s/image

Point cloud generation

~2 min/image

Poisson surface reconstruction

~15 min/image

Texture mapping

~3 min/image

Total:~20 minutes per image

Output:textured polygon mesh (.glb)

Pipeline B — Apple SHARP Splatting

SHARP inference

<1s/image

Total:under 1 second per image

Output:Gaussian Splat (.ply)

Why SHARP produces better results for LucidPast:

Test dataset validated across extreme conditions:

Both pipelines generated convincing 3D across all conditions. SHARP showed measurably superior texture preservation in portrait-heavy content - the dominant image type in institutional archives.

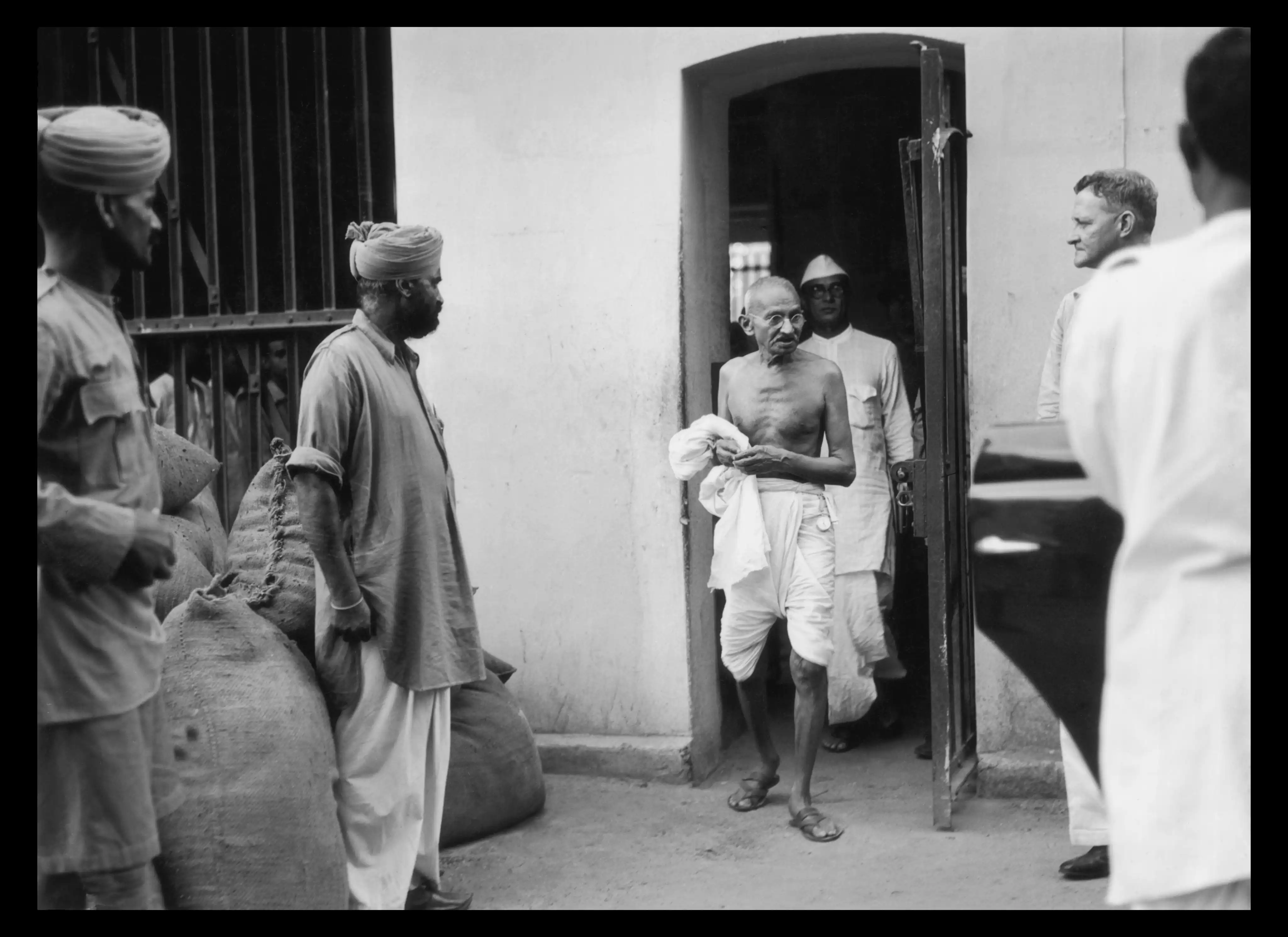

WorldLabs (marble.worldlabs.ai) - Tested

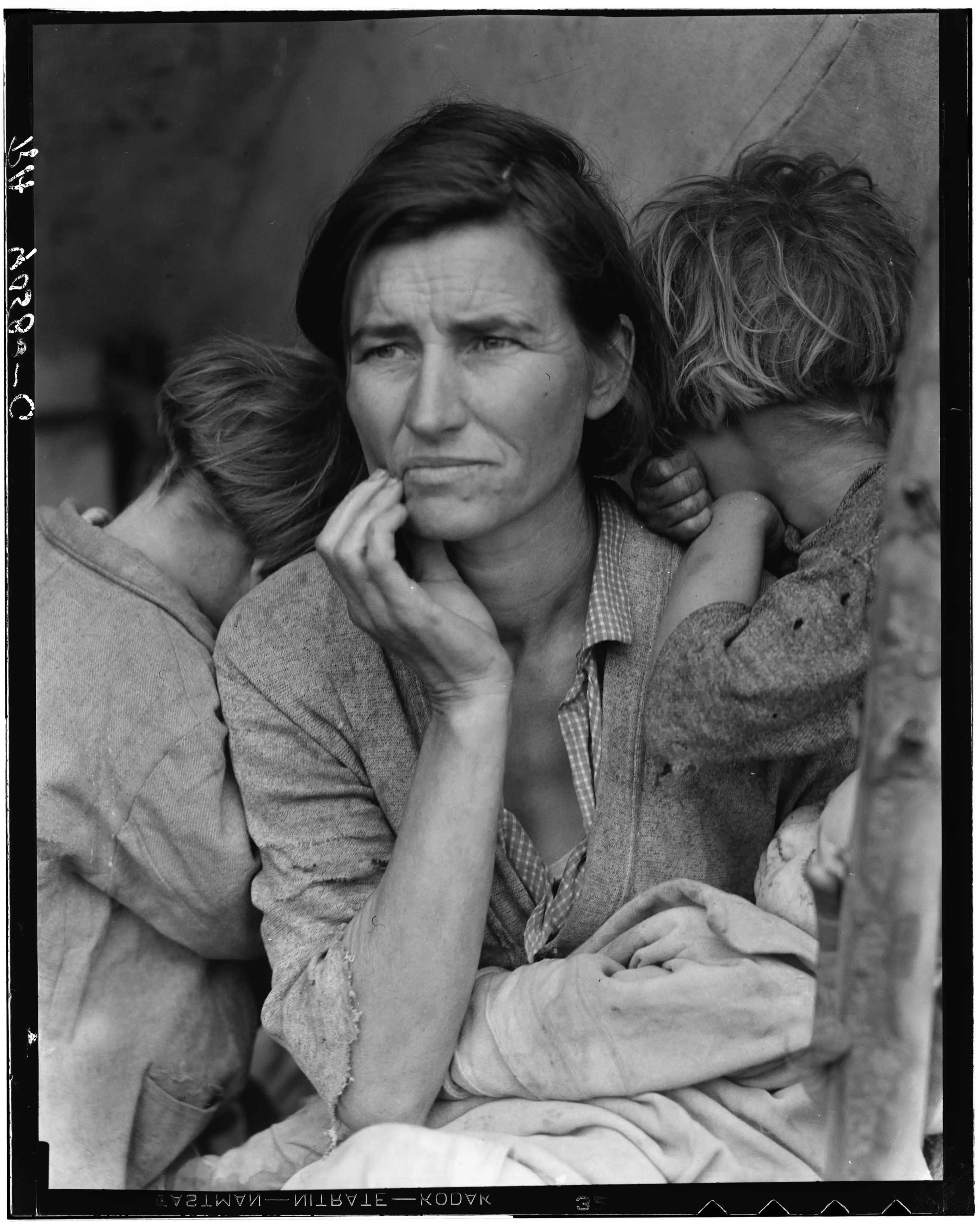

Single-photo to 360-environment conversion. Tested with Migrant Mother - the consistent benchmark image across all pipeline validation. WorldLabs uses AI image generation to approximate and fill invisible areas beyond the camera frame.

Result: Migrant Mother's face was completely altered and unrecognisable. The child was removed entirely. The background was fabricated as open ground with multiple trees - approximated from the partial background visible in the original.

The confirmed constraint

The moment a system generates content beyond the photograph, it loses the historical subject. This is not a technical failure - it is a category error. You are no longer showing the archive. You are showing an AI's interpretation of the archive.

LucidPast limits parallax to visible content only. What the photographer did not capture, the system does not invent.

WorldLabs output - Migrant Mother's face altered beyond recognition, child removed, background fabricated from partial scene data.

The Narrative Sequence

2D Slideshow Validation

I built a functional slideshow with simulated gaze tracking to validate narrative mechanics before investing in XR implementation. Internal testing showed theme emerged clearly by image 7-8 despite no explicit narration.

Emergent theme confirmed by image 7-8 without explicit narration. Pivot point felt surprising yet inevitable - the "dream logic" quality I was targeting.

Key Design Decisions

User Validation & Testing

This section will be completed after conducting user testing with 3-5 participants following Nielsen Norman Group's qualitative testing guidelines. Content will include testing methodology, key findings on thematic emergence rates, narrative coherence ratings, and representative participant quotes.

Outcomes & Reflection

What This Project Validated

Design Hypothesis

Attention-driven sequencing generates emergent themes. Progressive narrowing balances spontaneity with coherence.

Technical Feasibility

Two volumetric pipelines validated. SHARP produces photorealistic splats in <1s, enforcing ethical constraints architecturally.

Interaction Pattern

Persistent object transitions reduce disorientation. Two-mode gaze+gesture system addresses false-positives without breaking flow.

Skills Demonstrated

Interaction Design

Systems Thinking

Technical & Research

What I Learned

Making history feel like memory

Image Sources

- Archival photographs: Library of Congress, Prints and Photographs Division

- Neil Armstrong portrait: NASA (public domain)

- Black Mirror - Eulogy (S07E05): Netflix / House of Tomorrow - used for critical commentary

- Depth map outputs and 3D reconstructions: author's own work